This blog series is part of the joint collaboration between Canonical and Manceps. Check out Part Two here.

Kubeflow Pipelines is a great way to build portable, scalable machine learning workflows. It is one part of the larger Kubeflow ecosystem, which aims to reduce the complexity and time involved in training and deploying machine learning models at scale.

In this blog series, we demystify Kubeflow Pipelines and show how they can be used to produce reusable and reproducible data science. 🚀

In this first part, we go over why Kubeflow brings the right standardization to data science workflows, and then how Kubeflow Pipelines help achieve it.

In Part 2, we will get our hands dirty! We'll use the Fashion MNIST dataset and the Basic classification with TensorFlow example, and take a step-by-step approach to turning the example model into a Kubeflow pipeline so that you can do the same with your own models.

1. Why use Kubeflow?

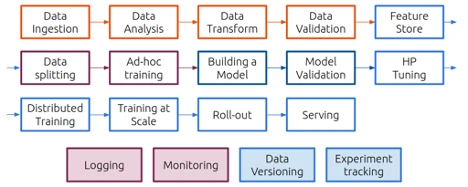

A machine learning workflow can involve many steps, and keeping all of them in a set of notebooks or scripts makes the workflow hard to maintain, share, and collaborate on. This leads to large amounts of “Hidden Technical Debt in Machine Learning Systems”.

In addition, these steps typically run on different systems. During the initial phase of experimentation, a data scientist works on a developer workstation or an on-prem training rig; training at scale typically happens in a cloud environment (private, hybrid, or public); and inference and distributed training often happen at the edge.

Containers provide the right encapsulation, so developers don't have to debug their code every time the execution environment changes, and Kubernetes adds scheduling and orchestration of those containers.

However, managing ML workloads on top of Kubernetes still involves a lot of specialized operations work, which we don't want to add to the data scientist's role. Kubeflow bridges this gap between AI workloads and Kubernetes, making MLOps more manageable.

2. What are Kubeflow Pipelines?

Kubeflow Pipelines is one of Kubeflow's most important features. It promises to make your AI experiments reproducible, composable (that is, made of interchangeable components), scalable, and easily shareable.

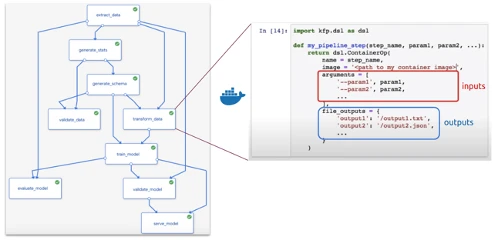

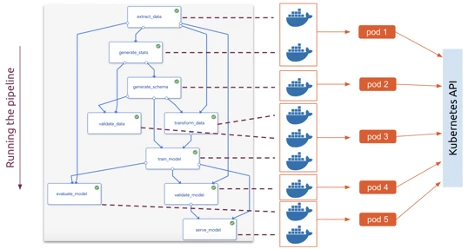

A pipeline is a codified representation of a machine learning workflow, analogous to the sequence of steps shown in the first image, that captures the workflow's components and their dependencies. More specifically, a pipeline is a directed acyclic graph (DAG) with a containerized process at each node, and it runs on top of Argo.

Each pipeline component, represented as a block, is a self-contained piece of code packaged as a Docker image. It takes inputs (arguments), produces outputs, and performs one step in the pipeline. In the example pipeline above, the transform_data step requires arguments produced as outputs of the extract_data and generate_schema steps, and its own outputs are, in turn, inputs to train_model.
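To make this data flow concrete, here is a minimal pure-Python sketch using the four step names from the example. It is not Kubeflow Pipelines SDK code: the function bodies, the record format, and the tiny least-squares "model" are hypothetical placeholders. It only shows that each step is a self-contained function with explicit inputs and outputs, and that transform_data cannot run until both of its upstream steps have produced their outputs.

```python
from typing import Dict, List

# Hypothetical stand-ins for the example pipeline's components.
# In a real Kubeflow pipeline, each of these would run in its own container.


def extract_data() -> List[Dict[str, float]]:
    """Produce raw records (hard-coded here for illustration)."""
    return [{"x": 1.0, "y": 2.0}, {"x": 2.0, "y": 4.0}, {"x": 3.0, "y": 6.0}]


def generate_schema() -> Dict[str, type]:
    """Describe the expected fields and their types."""
    return {"x": float, "y": float}


def transform_data(
    records: List[Dict[str, float]], schema: Dict[str, type]
) -> List[Dict[str, float]]:
    """Needs the outputs of BOTH extract_data and generate_schema."""
    for record in records:
        for field, field_type in schema.items():
            if not isinstance(record[field], field_type):
                raise TypeError(f"{field} should be {field_type.__name__}")
    # Hypothetical transformation: scale x into [0, 1] by its maximum.
    max_x = max(r["x"] for r in records)
    return [{"x": r["x"] / max_x, "y": r["y"]} for r in records]


def train_model(features: List[Dict[str, float]]) -> float:
    """Fit y = w * x by least squares through the origin and return w."""
    numerator = sum(r["x"] * r["y"] for r in features)
    denominator = sum(r["x"] ** 2 for r in features)
    return numerator / denominator


def run_pipeline() -> float:
    """Wire the steps together: each output feeds the next step's input."""
    records = extract_data()
    schema = generate_schema()
    features = transform_data(records, schema)  # depends on two upstream steps
    return train_model(features)  # depends on transform_data


print(run_pipeline())
```

With this toy data, the fitted weight comes out at (approximately) 6.0. In Kubeflow Pipelines, functions like these would each be packaged as a containerized component; the KFP SDK's lightweight Python components can do this, though the exact decorator or helper differs between SDK versions.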

Your ML code is wrapped into components, where you can:

- Specify parameters, which become editable in the dashboard and configurable for every run.
- Attach persistent volumes. Without them, we would lose all the data if our notebook or container were terminated for any reason.
- Specify artifacts to be generated (graphs, tables, selected images, models), which end up conveniently stored in the Artifact Store, inside the Kubeflow dashboard.

Finally, when you run the pipeline, each container is executed somewhere on the cluster according to Kubernetes scheduling, and each step starts only once the steps it depends on have finished.
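The effect of dependency-aware scheduling can be sketched with Python's standard-library graphlib module (Python 3.9+). This is not how Kubeflow or Argo is implemented; it only illustrates how a DAG determines which steps may run at the same time. The edges come from the example pipeline described earlier, with one assumption the text does not state: generate_schema is taken to have no upstream dependency.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each key lists the steps whose outputs it consumes (its predecessors).
# Assumption: generate_schema does not depend on extract_data.
PIPELINE: Dict[str, Set[str]] = {
    "extract_data": set(),
    "generate_schema": set(),
    "transform_data": {"extract_data", "generate_schema"},
    "train_model": {"transform_data"},
}


def schedule_waves(graph: Dict[str, Set[str]]) -> List[List[str]]:
    """Group steps into waves; all steps in one wave could run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not a DAG
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every dependency is satisfied
        waves.append(ready)
        # A real orchestrator would launch these containers and wait for
        # them to finish; here we simply mark them as done.
        sorter.done(*ready)
    return waves


for number, wave in enumerate(schedule_waves(PIPELINE), start=1):
    print(f"wave {number}: {wave}")
```

Running it prints three waves: extract_data and generate_schema together, then transform_data, then train_model. The CycleError raised by prepare() on a cyclic graph is a small reminder of why a pipeline has to be acyclic: with a cycle, no step would ever become ready.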

This containerized architecture makes it simple to reuse, share, or swap out components as your workflow changes, which it inevitably does.

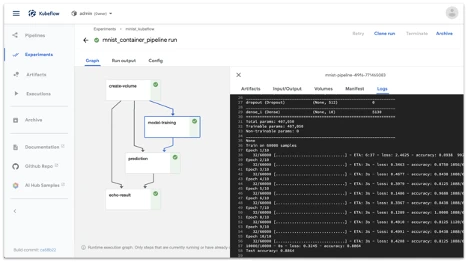

After running the pipeline, you can explore the results in the Pipelines UI of the Kubeflow dashboard, debug, tweak parameters, and create more runs.

In the next post, we will create the pipeline shown in the last image using the Fashion MNIST dataset and the Basic classification with TensorFlow example, taking a step-by-step approach to turning the example model into a Kubeflow pipeline so that you can do the same with your own models.

